Every VP of Operations in a regulated contact center has heard the same pitch at least a dozen times in the last two years: "Automate everything. AI will handle it." The deck is usually compelling. The pilot usually works. And then the first real escalation comes in — a distressed borrower two payments behind, a debt-relief client who hasn't opened an email in a month, an insurance policyholder who just received a denial — and the AI produces something technically correct and emotionally catastrophic.

The backlash, predictably, lands on the wrong lesson. Teams either retreat entirely ("we're not ready for AI") or double down indiscriminately ("we just need better prompts"). Both miss the point. The real question was never whether to automate. It was always what.

In regulated contact center environments — debt relief, consumer lending, BPO, insurance — that question carries consequences that go beyond a poor customer experience. An automated touchpoint that violates a state-specific disclosure requirement isn't just bad CX. It's a compliance event. A robotic IVR that misroutes a vulnerable customer in financial distress isn't a workflow inefficiency. It's reputational damage with regulator-facing liability attached.

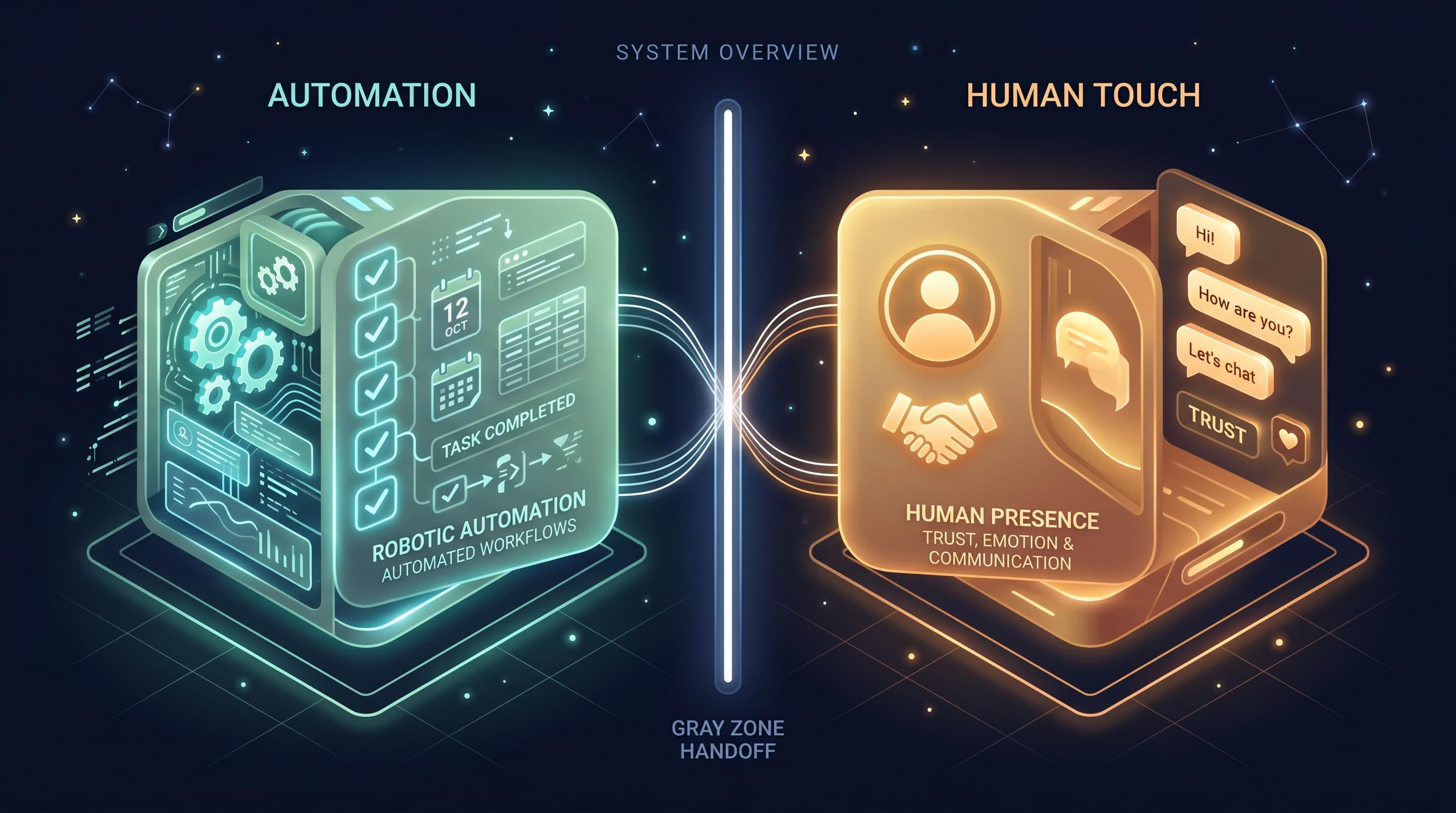

The principle we've converged on, and the one that has survived contact with actual enterprise operations, is straightforward: automate the process, not the relationship. But a principle without a decision framework is just a bumper sticker. This playbook is the framework.

- The automation question is a placement question. Every sales and service task can be mapped to one of four quadrants based on how repetitive and emotionally weighted it is.

- Regulated contact centers have a narrower automation window. Compliance requirements, licensed-agent rules, and vulnerable-customer obligations all constrain which quadrants you can operate in with AI alone.

- AI-assisted is not the same as AI-owned. The most powerful operating mode is human-led, AI-accelerated: a licensed negotiator on the call with AI surfacing context, compliance flags, and next-best-action in real time.

- The uncanny valley is real and costly. Automation that feels almost human but isn't triggers trust collapse — especially in high-stakes financial conversations.

- Your 2027 competitive moat won't be whether you automated. It'll be the quality of what you kept human.

The question isn't whether. It's what.

Most contact centers today are not under-automated; they're mis-automated. They've deployed automation where it's visible and easy: IVR trees, drip sequences, chatbots at the top of the funnel. But they've left the highest-leverage work (negotiation, exception handling, compliance) locked behind human overhead. The cost usually shows up in escalation rates, cycle time, abandoned-attempt rates, and revenue per touch that stays flat.

The response, predictably, goes wrong in one of two ways. Teams either retreat into maintaining the status quo, or they double down on broader AI integration and fail, sometimes repeatedly, because the integration isn't aligned with the underlying contact center operations.

The Automation Quadrant

The inverse is the opportunity. The single biggest unlock in contact center operations is learning to distinguish between the things that can be reliably automated and the moments where human intervention returns leverage that's not captured in any per-call metric. To draw that line, map every interaction across two dimensions:

- Repetitive → Creative: Is the task always the same (collect name, verify account), or does it require judgment about what to do next?

- Transactional → Emotional: Is the customer trying to accomplish a task, or are they in a state of distress where the relationship matters as much as the resolution?

Plot every interaction on that 2×2 grid, and you get four quadrants. Each quadrant maps to a different operating model:

Automate Fully

Repetitive + Transactional

- Data enrichment & CRM hygiene

- Scheduled compliance notifications

- Account verification & status checks

AI-Assisted

Creative + Transactional

- Next-best-action routing

- Outcome suggestions & scripts

- Compliance rule checking

Human-Led, AI-Tracked

Repetitive + Emotional

- Status updates with humans handling objections

- AI monitors for compliance violations

- Real-time escalation to supervisor if needed

Keep It Human

Creative + Emotional

- Negotiation & settlement discussions

- Vulnerable customer support

- Complex dispute resolution
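The quadrant mapping above can be sketched as a small lookup. This is a minimal illustration, not a production classifier: the `Task` type, its two boolean flags, and the `operating_model` function are hypothetical names, and in practice each axis is a judgment call rather than a clean yes or no.

```python
from dataclasses import dataclass
from enum import Enum


class OperatingModel(Enum):
    AUTOMATE_FULLY = "Automate Fully"
    AI_ASSISTED = "AI-Assisted"
    HUMAN_LED_AI_TRACKED = "Human-Led, AI-Tracked"
    KEEP_IT_HUMAN = "Keep It Human"


@dataclass(frozen=True)
class Task:
    # Hypothetical representation of one sales or service task.
    name: str
    creative: bool   # False = repetitive, True = requires judgment
    emotional: bool  # False = transactional, True = customer may be in distress


def operating_model(task: Task) -> OperatingModel:
    """Map a task's position on the two axes to its quadrant's operating model."""
    if task.emotional:
        if task.creative:
            return OperatingModel.KEEP_IT_HUMAN
        return OperatingModel.HUMAN_LED_AI_TRACKED
    if task.creative:
        return OperatingModel.AI_ASSISTED
    return OperatingModel.AUTOMATE_FULLY


tasks = [
    Task("Account verification", creative=False, emotional=False),
    Task("Next-best-action routing", creative=True, emotional=False),
    Task("Status update with objections", creative=False, emotional=True),
    Task("Settlement negotiation", creative=True, emotional=True),
]
for t in tasks:
    print(f"{t.name}: {operating_model(t).value}")
```

Treating the axes as booleans keeps the sketch readable. A team that scores tasks on a scale would need to choose its own thresholds for each axis.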

What to automate

The top-left quadrant — repetitive, transactional — is your automation sandbox. Data entry, format verification, compliance checking, scheduled notifications, account status lookups. All safe. All high-leverage when done at scale. This is where the efficiency gains live.

What to never automate

The bottom-right quadrant — creative, emotional — is where automation breaks and costs money. Negotiation with a borrower who's facing a garnishment. Settlement discussions with a customer who just learned they're in collections. Appeals processes where the outcome depends on tone, credibility, and understanding. The moment you automate this, you've moved from efficiency to liability.

The AI qualifies. The negotiator closes. The mistake is asking the AI to do both: to build trust and to execute a decision framework. It doesn't work.

The uncanny valley of sales

The real failure mode in regulated contact center automation isn't a complete AI takeover. It's the middle ground: bots that sound almost human, workflows that feel almost right, systems that make the customer work harder than they should have to. This is the uncanny valley, and it's expensive.

A customer who knows they're talking to a bot has one set of expectations. A customer who thinks they're talking to a human and discovers otherwise has another. Trust collapse is the outcome, followed by escalation, repeat contact, negative word-of-mouth, and exposure to bad-faith complaints.

Human-led, AI-accelerated: the operating mode

The unrealized advantage in 2025 is not "replace the agent." It's: "make the licensed human better." A licensed negotiator on a call with real-time AI context — case history, compliance flags, next-best-action suggestions, competitor offers, settlement ranges — doesn't become redundant. They become more valuable.

Every regulated vertical has moments where AI adds real value:

- Pre-call briefing, automated: Before a licensed negotiator picks up a settlement call, AI surfaces the account's payment history, prior express written agreements, and suggested negotiation lanes.

- Real-time compliance assist: During a call, AI listens and flags moments where a disclosure requirement kicks in, a state-specific rule applies, or a vulnerable-customer protocol needs activation.

- Post-call documentation, automated: Once the call ends, AI generates a summary, updates the compliance log, triggers the appropriate next workflow step, and surfaces any hand-offs needed.

That's the real unlock. Not replacement. Acceleration. A licensed agent who spends less time on status checks and more time on negotiation is going to close more deals, make better use of time on the phone, and produce compliance-grade documentation that doesn't require a second pass by a paralegal.

Where this goes next

The automation frontier in regulated contact centers is moving in two directions simultaneously. On one half of the quadrant, AI is getting smarter about detection, which favors the contact center that has a unified data layer. On the other half, what keeps differentiating is the quality of the human work. The teams with a competitive advantage in 2027 will be the ones that automated correctly at each stage.

The principle holds across every regulated vertical: automate the process, not the relationship. The relationship is where your competitive moat lives.

FAQ

Can AI handle inbound calls in regulated contact centers at all?

Yes, specifically in the top half of the quadrant. AI agents are well-suited to initial intake: confirming identity, verifying account status, collecting baseline information, and setting expectations about next steps. The constraint isn't capability. It's regulation. In the U.S., TCPA-regulated verticals require express written consent, and the FCC has treated AI voices as falling under the 'artificial or prerecorded voice' category. Penalties run up to $43,280 per call. Informational calls (e.g., appointment reminders to existing customers) have different rules, but always assume: if a licensed agent could incur penalties, the AI can too.

How do you handle the handoff from AI to human without context loss?

This is the architecture question that determines whether your AI investment pays off. The handoff has to be synchronous and composable. CRM integrations do it. Middleware layers do it. Contact center work-queuing over WebSocket, CDR enrichment, and full-context history aren't novel, but they're the difference between a 5-minute context-recovery period for a human rep and a handoff that feels like one continuous customer experience. Context loss at handoff is the single most common reason AI-assisted programs end up with regulator-facing liability attached.
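One way to picture a handoff without context loss is as a structured payload that the AI side must complete before the human agent picks up. The sketch below is an assumption-laden illustration: `HandoffContext`, its fields, and `build_handoff_payload` are hypothetical names, not the schema of any particular CRM or middleware product.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class HandoffContext:
    # Hypothetical fields; a real deployment would mirror its own CRM schema.
    customer_id: str
    intent: str
    identity_verified: bool
    transcript_summary: str
    compliance_flags: list[str] = field(default_factory=list)


def missing_fields(ctx: HandoffContext) -> list[str]:
    """Return the names of required text fields that are empty."""
    required = ("customer_id", "intent", "transcript_summary")
    return [name for name in required if not getattr(ctx, name).strip()]


def build_handoff_payload(ctx: HandoffContext) -> str:
    """Serialize the context for the human agent; refuse if it is incomplete."""
    gaps = missing_fields(ctx)
    if gaps:
        raise ValueError(f"handoff context incomplete: {', '.join(gaps)}")
    return json.dumps(asdict(ctx), sort_keys=True)


ctx = HandoffContext(
    customer_id="c-1001",
    intent="settlement inquiry",
    identity_verified=True,
    transcript_summary="Caller verified identity and asked about settlement options.",
    compliance_flags=["state_disclosure_pending"],
)
print(build_handoff_payload(ctx))
```

Rejecting an incomplete payload at the point of transfer is a design choice. It surfaces context gaps as an engineering error rather than as a five-minute recovery period on a live call.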

What about outbound AI voice agents for debt collection?

This is one of the highest-scrutiny areas in the space. CFPB guidance, state collection laws, and individual consent requirements all intersect. Automated outreach may be workable for routine reminders about payments that are due or past due. Once an account is in active collection, the licensed agent has to review before contact is initiated. Handle it correctly, and you stay compliant. Miss it, and you're on the wrong side of a UDAP verdict.

How do you measure whether your automation is in the right quadrant?

Three metrics tend to be diagnostic. Escalation rate from automated touchpoints: if AI-handled contacts escalate frequently, one of two things happened. Either the AI misclassified the request, or you put a conversational task in the wrong quadrant. Customer effort score on AI-handled contacts: if customers are working harder on automated flows, that's a sign you're trying to automate something that shouldn't be. Repeat contact rate: if more than a small single-digit percentage of resolved interactions generate a follow-up contact on the same issue, your customer record isn't really unified, no matter what your architecture diagram says.
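Two of these metrics can be computed directly from contact logs. The sketch below assumes a hypothetical `Contact` record and a chronologically ordered list. Customer effort score is left out because it comes from survey responses rather than interaction logs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Contact:
    # Hypothetical log record; field names are illustrative only.
    customer_id: str
    issue_id: str
    handled_by_ai: bool
    escalated: bool
    resolved: bool


def escalation_rate(contacts: list[Contact]) -> float:
    """Share of AI-handled contacts that escalated to a human."""
    ai_handled = [c for c in contacts if c.handled_by_ai]
    if not ai_handled:
        return 0.0
    return sum(c.escalated for c in ai_handled) / len(ai_handled)


def repeat_contact_rate(contacts: list[Contact]) -> float:
    """Share of resolved contacts followed by a later contact on the same issue.

    Assumes `contacts` is sorted in chronological order.
    """
    resolved_total = 0
    repeats = 0
    for i, c in enumerate(contacts):
        if not c.resolved:
            continue
        resolved_total += 1
        key = (c.customer_id, c.issue_id)
        if any((later.customer_id, later.issue_id) == key for later in contacts[i + 1:]):
            repeats += 1
    return repeats / resolved_total if resolved_total else 0.0


contacts = [
    Contact("c1", "i1", handled_by_ai=True, escalated=False, resolved=True),
    Contact("c2", "i2", handled_by_ai=True, escalated=True, resolved=False),
    Contact("c1", "i1", handled_by_ai=False, escalated=False, resolved=True),
    Contact("c3", "i3", handled_by_ai=True, escalated=False, resolved=True),
]
print(f"escalation rate: {escalation_rate(contacts):.1%}")
print(f"repeat contact rate: {repeat_contact_rate(contacts):.1%}")
```

The pairwise scan is quadratic and only suitable for small samples. At production volume the same definitions would more likely be expressed as a grouped query over the contact table.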

Does this model require replacing existing technology?

Not immediately. The quadrant framework is technology-agnostic: you can apply it to your current stack to identify where automation is producing value and where it's causing friction. If your AI tools and your agent tools are talking through a middleware layer rather than natively integrated, you've got configuration work, not a replacement project. The question is whether your systems can sync customer context, handle synchronous handoff between AI and human agents, and log the outcomes in a queryable way where the productivity gains show up. If your existing infrastructure can do all that, you're good. If it can't, you've got context loss problems at handoff that are architectural, not configurational.

What's the most common mistake teams make when starting?

Automating the visible tasks first. Outbound and inbound sequences and IVR trees are the visible targets, but the highest-leverage work is often invisible, especially across email, chatbot, and multichannel flows. Early pilots often succeed because they're built on high-repetition, low-stakes flows. Then teams try to expand the same success across the entire customer lifecycle, and it breaks. The lesson isn't 'we need better AI'. It's 'we tried to automate something that wasn't actually repetitive or low-stakes'. Start with the infrastructure question: Can your platform handle synchronous handoffs between AI and human? Can it merge context across silos? Can it track what happened on a call (for compliance and performance)? Get that working first. Once you have observability, the automation decisions become obvious.